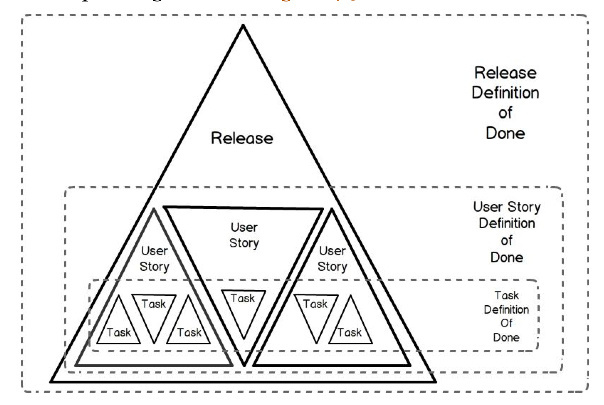

Obviously, having a DOD (Definition of Done) is a requirement for any Scrum team, and it fosters good practices in a team as well. But thinking we are “done” just because we met acceptance criteria doesn’t seem… I don’t know… Agile?

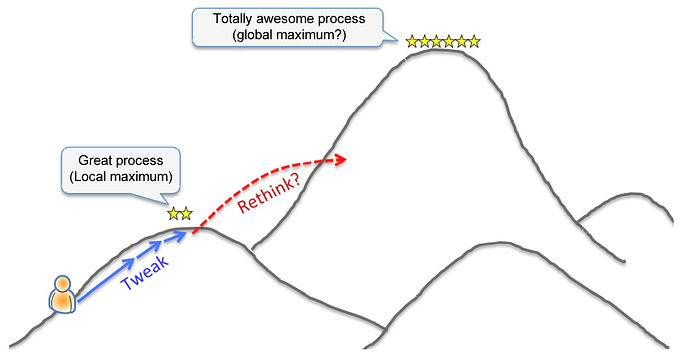

As we know, a software product is never actually done, and any feature or story we get to a “done” state could potentially be improved through inspection and adaptation by the team, stakeholders, and end users. My question is: how can we shift the mindset many orgs have from “This has met the DOD, so it’s done” to “Let’s inspect this increment of work and see if it can bring more value or a better experience for our customers”?

An observation:

The sprint review is treated by some as a demo of “done” work to be signed off on by stakeholders, instead of what it’s supposed to be: a meeting to review potentially shippable software and to inspect and adapt. The output of the sprint review is supposed to be a “REVISED product backlog.” Not… “These things have met the DOD, and now we are 35% complete toward our release.”

Thoughts?